Introduction

Testing is an important part of software development, as it helps the team understand the behavior of the program before it is released. Manual testing — trying a bunch of things to see what happens — gets tiresome quickly, and so the temptation is to not do it very often. A better way to learn as much about the behavior of the software as quickly and painlessly as possible is to use an automated approach.

The simplest form of automated testing is regression testing — seeing if the software does the same thing it did last time it was presented with the same input scenario. Changes detected by regression testing can then be reviewed, to see if they were caused by intentional improvements, or are “regressions” in quality that should be corrected before release. The easier it is to run automated regression tests that have good “coverage” of the codebase, the more “freedom of action” programmers have to make changes without fear of breaking things.

While automated regression testing is often easier said than done, xLights does offer some obvious opportunities. Rendering is an example of something that is complicated enough to break, where active development is desired, and where automated regression testing is straightforward enough. Why? Because we have:

- An input we can capture — the .xsq sequence file (plus the layout and other show folder contents)

- An output we can capture — the .fseq output file

- Means to drive the program externally for integration into an automation scheme — various HTTP commands for batch render and the like

Approach

Correctness Testing

Correctness testing is quite straightforward:

- Start with a sequence.

- Make sure the output render looks good.

- Save it as the “known good” or “expected” output.

- In the future, compare the results of render to the “expected” output to see that correctness is maintained.

- If there is a difference between the expected output and a test result, establish if the change was good / intentional, or bad / accidental. If the former, update the expected output, if the latter, fix whatever broke.

There are modest technical and practical concerns here, all quite surmountable.

The first thing is to have a suite of sequences with expected outputs. A good correctness test suite has a lot of “coverage” – exercises all the parts of the code – without taking an excessive amount of time to run, and without being subject to false positives, “noise”, or “churn”. Said another way, the tests should only test what they intend to test, and not be brittle and detect differences when other things change. This is the part where someone has to do real, hard work. I have started what I call the “Supercoverage Test Set” (SCTS)… it hits most of the effects and their parameters a few times (for gaps, see “Future Work” below), and runs in single digit minutes. Of course, “Supercoverage” here may as well mean “superficial coverage”, but it has already detected several things (See “Results” below).

Another thing is to keep the test suite in version control (e.g. github) so that tests and results are not lost, so that history is kept, and so that many people can access it. (Among other reasons.)

Practical considerations arise. .fseq files are not great because:

- They’re a bit big.

- They contain unique IDs and stuff that change for no reason at all, so there is noise.

- They’re binary, and compressed, and therefore inscrutable when it comes to telling how they changed. Because of the way compression units are done, they could even change without the uncompressed contents changing.

The solution to that was to develop a tool, written in python, fseqFile.py. (For details, see Tool Details below.) To fix issue #1, the tool calculates a summary, the CRC of the .fseq file uncompressed contents, which is much smaller than the .fseq file itself, but will almost certainly change when the contents change. To fix issue #2, the tool calculates the CRC only on the part of the files we care about, and breaks the CRCs down into sections based on model and timing region. To fix issue #3, the CRC report is saved in JSON, so that it can be interpreted in a reasonable way by general diff tools, and in an even better way by a special-purpose comparison program. All of these have been written and are working well.

Consistency Testing

Given the limitations of a purpose-built test set, it makes sense to try the regression tests on suites of real-world sequences, such as people’s shows, vendor offerings, google drive, and so on. The issue here is that we don’t know if xLights is currently rendering them correctly or incorrectly. Still, it is possible to run the suites repeatedly to see if anything changed from one version to the next, so I took this approach on my own 2021 show folder (2022 is coming soon).

During the course of consistency testing there were several technical challenges encountered:

- There are cases where xLights intentionally doesn’t give the same answer, basically anything random, like the placement of circles in the circles effect, text that depends on the date, and so on. (See Challenges and Workarounds.)

- There are bugs in xLights that cause renders to be inconsistent from one run to the next. (See Results.)

In addition to the technical challenge, there may be issues with capturing the test suite for others to use. (For example, my show folder contains copyrighted vendor sequences, copyrighted songs, and so on, so we could not use it as an official test suite.) It is also large, and highly skewed toward certain effects (like video effects that PixelProDisplays and some other vendors overlay on the source effects to ensure a consistent experience for their customers).

Performance Testing

Performance testing is an obvious addition to most forms of regression testing, including that outlined above. All that is needed is to collect how long each render takes. There are some considerations:

- Tests need to run long enough that they are not subject to noise of startup, teardown, etc.

- The machine used for comparison points has to be the same, or comparable, otherwise you would not get the same timing consistently.

- As effect rendering time involves mélange of code routines, performance represents an aggregate of everything in the sequence. Performance changes for effects that are not represented sufficiently in the test suite would not be detected. To mitigate this, per-effect performance test sequences would be helpful.

Results on my 2021 show folder show reasonable consistency across xLights versions from 2022 (See results below). Collection of performance data and generation of spreadsheet files is automated by a tool, see “Tool Details” below.

Challenges & Workarounds

The first challenge with correctness and consistency testing using the “identical output files” / CRC approach outlined above is with effects that are intentionally random. This includes strobes, shapes, circles, lines, twinkle, meteor, snow, and several others with completely random placement, plus effects with random modes such as VU meter and tendril. The approach for solving this was to introduce a per-effect pseudo-random number generator (PRNG) state, which is consistently seeded by taking the effect, the start time, the model, and other parameters so that each effect gets unique “random” values, but there is consistency from run to run. There are a few exceptions (see future work below), but changes are mostly made and available on a branch. (For the curious, the PRNG used is xoroshiro128+, which is state-of-the-art.

The second approach to consistency is to disable unstable effects within the sequence; xsqFile.py can do this (see the Tool Details section below). While it does make the sequence work, obviously the downside is that the tests become completely blind to issues in the disabled effects.

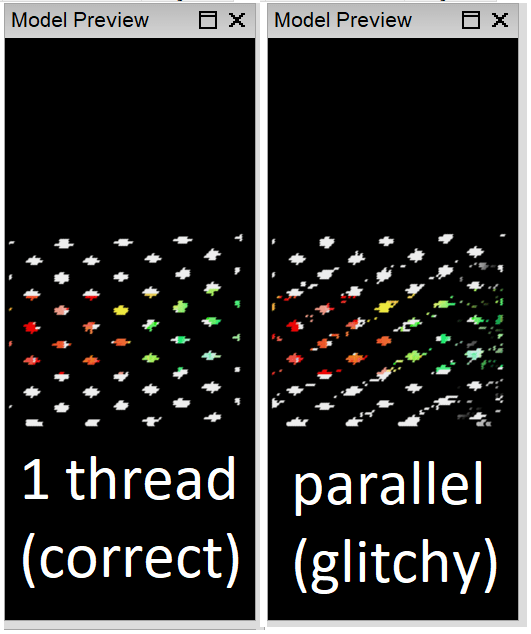

A second source of instability in the render result is caused by parallel implementations that may render overlapping objects in different orders. For example, if meteors overlap each other, whichever rendered last will show, and this could differ if the meteors are rendered in parallel. The best solution here is to improve the render algorithm to make it consistent, but the short-term workaround is to disable parallelism on the testing branch, or disable the affected effects if code changes are not possible.

A final source of instability is layouts with nondeterministic behavior. Using the same node twice in a submodel or group is an invitation for inconsistency, and so layouts must be scrubbed with “check sequence” and such glitches removed before they are suitable for inclusion in the correctness or consistency test suites. (For performance tests, this likely does not matter.)

Results

Sometimes, just creating a test suite with extensive coverage means you could be the first person to use a feature in a while, and you might encounter some things that are broken. Here are some things I found while creating the supercoverage tests:

- Crash in single strand FX when a lot of color boxes are checked (#3729)

- Value curve for ripple rotation not working (#3726/#3727) (Fixed 2023.04)

Because I just added the test suite, and it took a lot of work to make the random number generators stable to get consistent results, you can’t expect stable results from backtesting old versions. It is therefore somewhat difficult to tell what kind of past changes would have been caught by automated regression tests, but here are some examples I found by trying:

- The maximum radius of a fan effect was changed in 2022.24; based on how value curves work this caused changes in how existing effects render.

- 2022.21 saw some changes in how “garlands” is applied to a group with buffer style “default model as pixel”. Some butterfly effects also changed at this time. Not sure what caused the change and if it was intentional or not.

- The Fractal/Ivy setting on the wave effect was broken (between 2022.13 and 2022.19, but has since been fixed so I did not narrow it down to an exact change).

- There were changes to piano and music at some point between 2021.39 and 2022.13.

- And several more, but mostly they were new features that were introduced, so running them on older versions that didn’t include the features naturally produced different results.

While it is hard to run the consistency suite with so many random effects out there, consistency testing did find a couple of threading problems in xLights:

- Heap corruption crash in text effect (#3728)

- Crash in shape effect with unicode characters as shapes (not yet filed)

- Parallelism in moving data from nodes to render buffer causes poor quality. (This issue, #3740, is fixed in 2023.04.)

- There are instabilities in meteor, twinkle, spirals in 3D mode, and several other effects, however they have not (yet) been seen to produce awful render quality like that seen in warp.

Performance testing was conducted on my 2021 show. (Backtesting the 2022 show is hardly sensible, as it uses effects that were not present in versions prior to 2022.19). In aggregate, render performance improved slightly in xLights releases from tail end of 2021 to date. Many sequences improved, and many stayed the same. Currently, there has not been enough testing to provide visibility into what specific code commits caused performance to change, but an effort to track this, at least going forward, is in the future plans.

Tool Details

xlTest.py

This is the main regression test driver script, capable of executing the majority of a regression test cycle, depending on which command-line options are specified. It uses capabilities from many of the modules below, should it be desired to separate the duties.

For either a single .xsq file or a suite (directory) it will, optionally:

- Start / Stop xLights

- Switch to the show folder

- Perform the renders

- Calculate the CRCs and write to resulting summary files. It will do this at the model+timing level, but only if requested (by option).

- Compare the summaries to the baseline and create reports of differences

- Store the performance results

To do these things, xlTest.py needs to know paths to the xlights binaries, suite show folder, xsq files, expected CRC summaries, and output directories.

See the command-line help for the exact syntax.

perf2xlsx.py

This tool will combine the performance results from multiple run directories into one .xlsx spreadsheet, making one row per sequence and one column per xLights render run tested. The command line takes the output file name, and a list of input perf_report.json files; see the command-line help for details.

xlDo.py

This is my wrapper for making xlDo or other HTTP calls to xLights. In this context, its subroutines are used for starting xlights, switching show folders, and rendering sequences, nothing else.

fseqFile.py

This contains the subroutines for reading .fseq files, decompressing them, and calculating CRCs. With no other information, it can calculate CRCs globally and by frame. Optionally, it will read controllers and models from the show directory, allowing CRCs to be calculated per model, and it can load timing tracks from an .xsq file to calculate CRCs by timing interval, or timing interval + model combination. It can be run as a standalone program to produce a JSON summary of a .fseq file; see the command-line arguments produced by the help.

xsqFile.py

This contains logic for parsing .xsq files, and is used as a library for some of the other tools. It is not a complete implementation, but is able to get information about timing tracks, iterate through effects, and disable effects. Run from the command line, it will go through a folder of .xsq files and produce new ones with the unstable effects disabled; this can be done in two modes, one reflective of unstable effects in xLights as of 2023.02, and another reflective of the development branch where the attempt is being made to stabilize all render results. See command line help for details.

compareFseqCRCs.py

This contains logic for comparing JSON CRC summaries produced by fseqFile.py. It is used as a library by the main test driver, but can also be used standalone to compare two sequence summaries. See the command-line arguments for details.

Future Work

Making a determination of the effect of code changes on “typical” performance requires a test suite that is representative of “typcial” workloads. Whether we have to build that, can use gdrive stuff, or can get help from sequence vendors is an open investigation area.

There are many code changes on an xLights fork to merge in to get the deterministic behavior on master. Merging these would require some additional validation (performance, for example), and agreement from the xLights primary committers. After that, it would be possible to integrate the tests more deeply into the development process, so that they run in the background and keep us all safe from unintended changes.

One of the straightforward things to chip away at is expansion of the unit test suite, which currently lacks (at least) the following things:

- DMX, Servo, Glediator effects

- More thorough tests on shaders, faces, and states

- Testing of single strand FX

- Text effects with string substitutions

- Larger models – this would be essential for per-effect render performance testing also

- Roto-Zoom

- In/Out transitions

- Morph

- More thorough tests of render styles and other things on that tab

- Chroma Key, Brightness, Contrast, HSV adjustment, Sparkles Reflect Music

- Handling of a “rendered for” date for text effects and shaders

There are some things are still not deterministic on the development branch:

- Color palettes set to random blend

- Meteor, twinkle, and kaleidoscope (at least) have nondeterministic parallelism behavior on large renders

And, most obviously, automated testing should extend to aspects of xLights (and xSchedule) beyond rendering.